In September 2010 I had an amazing opportunity to be part of a Blender team with Dalai Felinto and Mike Pan, working alongside the artist Mehmet S. Akten (aka Memo) and the awesome teams of Office Broomer and Radboud University.

"Cosmic Sensation is a project born out of an idea by Physics Professor Sijbrand de Jong, formerly at CERN, and currently Director of the Institute for Mathematics, Astrophysics and Particle Physics at Radboud University Nijmegen; his idea was to "Use cosmic rays to make music"..."

Briefly, we made real-time visuals in the Blender Game Engine that were triggered by muons, the particles that form when cosmic rays hit the outer layers of Earth's atmosphere.

More info about the project:

http://www.experiencetheuniverse.nl/

http://www.msavisuals.com/cosmic_sensation

http://www.blendernation.com/2010/09/28/experiencing-cosmic-rays-with-blender-in-a-fulldome/

June 13, 2011

June 11, 2011

GLSL raycasting volume clipping plane

I received an email from Chris Rorden, PhD, director of the Center for Advanced Brain Imaging, who asked for some help with a clipping plane for a raycasting volume in GLSL. He is also the creator of the free MRIcroGL, a program designed to display 3D medical imaging using the computer's graphics card.

As I had no experience with volumetrics and raycasting, I learned a lot about how this stuff actually works in the process of helping.

So together we came up with this cool GLSL volume clipping method (Chris did most of it, hehe). Chris also suggested that the solution should be open source, so I could share it on my blog to help others.

I am not sure whether we were reinventing the wheel here, but I could not find a "shader based clipping plane thing" anywhere on the web.

so the fragment shader goes like this:

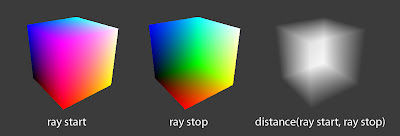

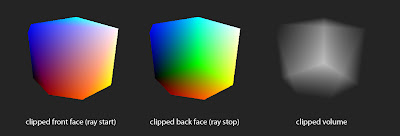

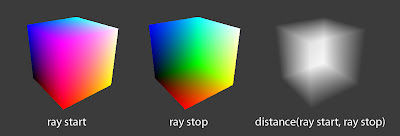

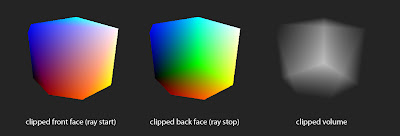

here are some illustrations:

volume clipping with azimuth 124°, elevation -44° and clipPlaneDepth 0.2 values

and a video of it in action

so the fragment shader goes like this:

    uniform float azimuth, elevation, clipPlaneDepth; // clipping plane variables
    uniform bool clip; // clipping on or off
    // stepSize and MaxSamples are used in the ray march below but were not
    // declared in the original listing; declaring them as uniforms is an assumption
    uniform float stepSize;
    uniform int MaxSamples;
    varying vec3 pos; // gl_Vertex.xyz from vertex shader

    // Single-Pass Raycasting at The Little Grasshopper
    // http://prideout.net/blog/?p=64
    struct Ray
    {
        vec3 Origin;
        vec3 Dir;
    };

    struct AABB
    {
        vec3 Min;
        vec3 Max;
    };

    // slab test: entry (t0) and exit (t1) distances of the ray through the box
    bool IntersectBox(Ray r, AABB aabb, out float t0, out float t1)
    {
        vec3 invR = 1.0 / r.Dir;
        vec3 tbot = invR * (aabb.Min - r.Origin);
        vec3 ttop = invR * (aabb.Max - r.Origin);
        vec3 tmin = min(ttop, tbot);
        vec3 tmax = max(ttop, tbot);
        vec2 t = max(tmin.xx, tmin.yz);
        t0 = max(t.x, t.y);
        t = min(tmax.xx, tmax.yz);
        t1 = min(t.x, t.y);
        return t0 <= t1;
    }

    // polar to cartesian coordinates (angles in degrees)
    vec3 p2cart(float azimuth, float elevation)
    {
        float pi = 3.1415926;
        float x, y, z, k;
        float ele = -elevation * pi / 180.0;
        float azi = (azimuth + 90.0) * pi / 180.0;
        k = cos( ele );
        z = sin( ele );
        y = sin( azi ) * k;
        x = cos( azi ) * k;
        return vec3( x, z, y );
    }

    void main()
    {
        vec3 clipPlane = p2cart(azimuth, elevation);
        vec3 view = normalize(pos - gl_ModelViewMatrixInverse[3].xyz);
        Ray eye = Ray( gl_ModelViewMatrixInverse[3].xyz, view );
        AABB aabb = AABB(vec3(-1.0), vec3(+1.0));

        float tnear, tfar;
        IntersectBox(eye, aabb, tnear, tfar);
        if (tnear < 0.0) tnear = 0.0;

        vec3 rayStart = eye.Origin + eye.Dir * tnear;
        vec3 rayStop = eye.Origin + eye.Dir * tfar;

        // Transform from object space to texture coordinate space:
        rayStart = 0.5 * (rayStart + 1.0);
        rayStop = 0.5 * (rayStop + 1.0);

        vec3 dir = rayStop - rayStart;
        float len = length(dir);
        dir = normalize(dir);

        // now comes the clipping
        if (clip)
        {
            gl_FragColor.a = 0.0; // render the clipped surface invisible
            //gl_FragColor.rgb = vec3(0.0, 0.0, 0.0); // or render the clipped surface black

            // see if clip plane faces viewer
            float facing = dot(dir, clipPlane);
            bool frontface = (facing > 0.0);

            // next, distance from ray origin to clip plane
            float dis = facing;
            if (dis != 0.0) dis = (-clipPlaneDepth - dot(clipPlane, rayStart.xyz - 0.5)) / dis;

            if ((!frontface) && (dis < 0.0)) return;
            if ((frontface) && (dis > len)) return;

            if ((dis > 0.0) && (dis < len))
            {
                if (frontface)
                {
                    rayStart = rayStart + dir * dis;
                }
                else
                {
                    rayStop = rayStart + dir * dis;
                }
                dir = rayStop - rayStart;
                len = length(dir);
                dir = normalize(dir);
            }
        }

        // Perform the ray marching
        vec3 step = normalize(rayStop - rayStart) * stepSize;
        float travel = distance(rayStop, rayStart);
        for (int i = 0; i < MaxSamples && travel > 0.0; ++i, rayStart += step, travel -= stepSize)
        {
            // ...lighting and absorption stuff here...
        }
    }
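To check the clipping math without a GPU, here is a minimal Python sketch of the same two steps: turning azimuth/elevation into a plane normal, and trimming a ray segment against the plane. It mirrors the clipping branch of the shader but is not part of MRIcroGL; `clip_segment` and `dot` are hypothetical helper names, vectors are plain tuples, and the segment is assumed to have non-zero length.

```python
import math

def p2cart(azimuth, elevation):
    """Degrees to a unit plane normal, same math as the shader (note the y/z swap)."""
    ele = -elevation * math.pi / 180.0
    azi = (azimuth + 90.0) * math.pi / 180.0
    k = math.cos(ele)
    return (math.cos(azi) * k, math.sin(ele), math.sin(azi) * k)

def dot(a, b):
    return sum(p * q for p, q in zip(a, b))

def clip_segment(ray_start, ray_stop, normal, depth):
    """Trim a segment given in 0..1 texture space against the clip plane.

    Returns (start, stop), or None when the whole segment is clipped away.
    """
    delta = [b - a for a, b in zip(ray_start, ray_stop)]
    length = math.sqrt(dot(delta, delta))
    direction = [d / length for d in delta]
    facing = dot(direction, normal)
    frontface = facing > 0.0
    # distance along the ray from its start to the plane
    dis = facing
    if dis != 0.0:
        centered = [c - 0.5 for c in ray_start]
        dis = (-depth - dot(normal, centered)) / dis
    if not frontface and dis < 0.0:
        return None
    if frontface and dis > length:
        return None
    if 0.0 < dis < length:
        hit = tuple(s + d * dis for s, d in zip(ray_start, direction))
        if frontface:
            return hit, tuple(ray_stop)
        return tuple(ray_start), hit
    return tuple(ray_start), tuple(ray_stop)
```

With a normal of (0, 0, 1) and a depth of 0.2, a ray running through the middle of the volume keeps only the part from z = 0.3 to z = 1, whichever direction it travels in.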

June 8, 2011

ROME "3 DREAMS OF BLACK"

ROME "3 DREAMS OF BLACK" is an interactive film/music clip powered by WebGL.

The exciting part is that the lead developer, Branislav Ulicny (Altered Qualia), emailed me to say that he had ported my GLSL depth of field with bokeh filter to WebGL. And recently I found out it is being used in this project.

My involvement shows up in the video at 2:00 ;)

To see it yourself, go here:

www.ro.me

The tech behind it:

www.ro.me/tech

May 18, 2011

Volumetric TimeLine

This is an old project.

Credit goes to Benoit Bolsee and Dalai Felinto for helping me.

I recently realised that Toneburst had already made something very similar to this, called Time Cube.

May 4, 2011

Procedural Animation System video3 - IK

A third update for my 2D procedural bipedal locomotion system, now featuring Inverse Kinematics.

You can see that the limbs don't stretch anymore and the animation looks a lot more natural now. Though at the moment the "walker" only faces left :P, and he now also walks backwards.

I will fix that.
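The post does not show how the IK is solved, so here is a minimal sketch of one common approach for a 2D leg: an analytic two-bone solver based on the law of cosines. It is not the code from the video; `solve_knee`, `thigh`, `shin` and `bend` are hypothetical names, and clamping the reach is the part that keeps the limb from stretching.

```python
import math

def solve_knee(hip, foot, thigh, shin, bend=1.0):
    """Return (knee, reachable_foot) for a 2D two-bone leg.

    hip, foot: (x, y) tuples; thigh, shin: bone lengths.
    bend: +1.0 or -1.0, picks which side the knee bends towards.
    The foot target is pulled back when it is out of reach, so bones never stretch.
    """
    dx, dy = foot[0] - hip[0], foot[1] - hip[1]
    dist = math.hypot(dx, dy)
    # clamp the hip-to-foot distance to what the two bones can actually span
    reach = min(max(dist, abs(thigh - shin) + 1e-9), thigh + shin)
    if dist > 0.0:
        ux, uy = dx / dist, dy / dist
    else:
        ux, uy = 0.0, -1.0  # degenerate target: point the leg straight down
    # law of cosines: distance along the hip-foot axis to the knee's projection
    along = (thigh * thigh - shin * shin + reach * reach) / (2.0 * reach)
    # perpendicular offset of the knee from that axis
    side = math.sqrt(max(thigh * thigh - along * along, 0.0))
    knee = (hip[0] + ux * along - uy * side * bend,
            hip[1] + uy * along + ux * side * bend)
    reachable_foot = (hip[0] + ux * reach, hip[1] + uy * reach)
    return knee, reachable_foot
```

Flipping the sign of `bend` mirrors the knee to the other side of the hip-foot line, which is one way a walker could be made to face right as well as left.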

May 2, 2011

Procedural Animation System video2

Here is a second update to my 2D procedural bipedal locomotion system for BGE.

I added feet (spheres) for the character, so now I have a basic walk/run cycle. The correct knee position is not yet implemented; what I have at the moment is just a Bezier curve drawn from hip to foot. So the white stick figure is a rough visual representation of how it may look when it's done.

May 1, 2011

first steps - 2D procedural bipedal locomotion

I am building a fully procedural animation system for a platformer game. Here you can see an early work-in-progress concept for foot placement.

Yellow lines show player velocity and step height; green and red cubes mark where the footprints are placed on the ground.

More of this soon.

April 12, 2011

realistic skin shader

For about two weeks now I've been working on a human skin shader. It is a paid job for a medical simulation, so it has to look as close to real life as possible, and it also means there will be no free code sample here yet. The shader consists of simple math, so it runs really fast.

Features:

- Widely customizable (could be used for any material)

- Tangent-space normal mapping

- Supports up to 8 lights (the usual fixed-function OpenGL light limit)

- Spherical Harmonics lighting

- Advanced specular reflections with fresnel ramp

Screenshots:

Standard Phong shading for comparison:

April 6, 2011

webcam head tracking using FaceAPI

FaceAPI is a real-time head-tracking engine which uses webcam input to acquire 3D position and orientation coordinates per frame of video. And it works!

I implemented it in the Blender Game Engine for the cockpit view of the BGE Air Race game.

Here is how I got it working in BGE:

1. Download FaceAPI here: LINK

2. Download FaceApiStreamer here: LINK

(it exports 6 degrees of freedom head tracking to a UDP socket connection)

3. Acquire the values from FaceApiStreamer in BGE with Python code like this (not sure if it is quite right, but it kinda works):

    import socket

    import GameLogic

    controller = GameLogic.getCurrentController()
    own = controller.owner

    if own["once"]:
        # Set the socket parameters
        host = "127.0.0.1"
        port = 29129
        addr = (host, port)

        # Create the socket once, bind it and keep it on the GameLogic module
        GameLogic.UDPSock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
        GameLogic.UDPSock.bind(addr)
        # Wait at most 10 ms for a packet so the game loop never stalls
        GameLogic.UDPSock.settimeout(0.01)
        own["once"] = False

    try:
        data, svrip = GameLogic.UDPSock.recvfrom(1024)
        # recvfrom returns bytes in Python 3, so decode before splitting
        values = data.decode("ascii").split(' ')
        own['xPos'] = float(values[0])
        own['zPos'] = float(values[1])
        own['yPos'] = float(values[2])
        own['pitch'] = float(values[3])
        own['yaw'] = float(values[4])
        own['roll'] = float(values[5])
    except (socket.error, ValueError, IndexError):
        # No packet this frame (timeout) or a malformed one: keep the old values
        pass
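To try the BGE script without a webcam, a small stand-in sender can feed it packets. This is only a sketch under one assumption taken from the parsing code above: each datagram holds six space-separated numbers in the order the script reads them. The real FaceApiStreamer format may differ, and `format_pose`, `parse_pose` and `send_test_poses` are hypothetical helpers.

```python
import math
import socket
import time

HOST, PORT = "127.0.0.1", 29129  # same address the BGE script binds to

def format_pose(x, z, y, pitch, yaw, roll):
    """Pack six numbers into the space-separated text form the BGE script parses."""
    return " ".join("%.4f" % v for v in (x, z, y, pitch, yaw, roll)).encode("ascii")

def parse_pose(data):
    """Inverse of format_pose, using the same field order as the BGE script."""
    fields = data.decode("ascii").split(" ")
    names = ("xPos", "zPos", "yPos", "pitch", "yaw", "roll")
    return dict(zip(names, (float(f) for f in fields[:6])))

def send_test_poses(count=100, interval=0.02):
    """Send a slow sideways head sway so the cockpit camera visibly moves."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    try:
        for i in range(count):
            sway = math.sin(i * 0.1)
            packet = format_pose(0.1 * sway, 0.0, 0.5, 0.0, 0.3 * sway, 0.0)
            sock.sendto(packet, (HOST, PORT))
            time.sleep(interval)
    finally:
        sock.close()
```

Calling `send_test_poses()` from a separate Python process while the game is running should make the tracked properties change; the units and value ranges here are made up for testing.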

Here is a blend file: LINK

You will need FaceAPI installed and FaceApiStreamer running in the background.

March 10, 2011

GLSL 8x8 Bayer matrix dithering

And here is 8x8 Bayer matrix dithering,

based on this paper: http://www.efg2.com/Lab/Library/ImageProcessing/DHALF.TXT

    // Ordered dithering aka Bayer matrix dithering
    uniform sampler2D bgl_RenderedTexture;

    float Scale = 1.0;

    float find_closest(int x, int y, float c0)
    {
        // 8x8 Bayer ordered dithering pattern, stored row by row.
        // Each input pixel is scaled to the 0..63 range before looking
        // in this table to determine the action.
        // A flat array with an array constructor (GLSL 1.20 or later) replaces
        // the C-style int[8][8] initializer, which strict compilers reject.
        int dither[64] = int[64](
             0, 32,  8, 40,  2, 34, 10, 42,
            48, 16, 56, 24, 50, 18, 58, 26,
            12, 44,  4, 36, 14, 46,  6, 38,
            60, 28, 52, 20, 62, 30, 54, 22,
             3, 35, 11, 43,  1, 33,  9, 41,
            51, 19, 59, 27, 49, 17, 57, 25,
            15, 47,  7, 39, 13, 45,  5, 37,
            63, 31, 55, 23, 61, 29, 53, 21);

        float limit = 0.0;
        if (x < 8)
        {
            limit = float(dither[x * 8 + y] + 1) / 64.0;
        }

        if (c0 < limit)
            return 0.0;
        return 1.0;
    }

    void main(void)
    {
        vec3 rgb = texture2D(bgl_RenderedTexture, gl_TexCoord[0].xy).rgb;

        vec2 xy = gl_FragCoord.xy * Scale;
        int x = int(mod(xy.x, 8.0));
        int y = int(mod(xy.y, 8.0));

        // dither each color channel separately
        vec3 finalRGB;
        finalRGB.r = find_closest(x, y, rgb.r);
        finalRGB.g = find_closest(x, y, rgb.g);
        finalRGB.b = find_closest(x, y, rgb.b);

        // for black-and-white output, dither the luminance instead:
        // float grayscale = dot(rgb, vec3(0.299, 0.587, 0.114));
        // finalRGB = vec3(find_closest(x, y, grayscale));

        gl_FragColor = vec4(finalRGB, 1.0);
    }
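The 8x8 table in the shader does not have to be typed by hand: Bayer matrices follow a recursive construction, where each matrix is built from four scaled copies of the one half its size. Here is a small Python sketch that generates the table and applies the same threshold test as `find_closest`; `bayer_matrix` and `dither_gray` are hypothetical helper names used only for this illustration.

```python
def bayer_matrix(n):
    """Return the n x n Bayer index matrix as a list of rows (n is a power of two)."""
    if n == 1:
        return [[0]]
    half = bayer_matrix(n // 2)
    # quadrants: [4M, 4M+2] on top, [4M+3, 4M+1] below
    top = [[4 * v for v in row] + [4 * v + 2 for v in row] for row in half]
    bottom = [[4 * v + 3 for v in row] + [4 * v + 1 for v in row] for row in half]
    return top + bottom

def find_closest(matrix, x, y, c0):
    """Threshold one channel value (0..1) against the matrix, like the shader does."""
    n = len(matrix)
    limit = (matrix[x % n][y % n] + 1) / float(n * n)
    return 0.0 if c0 < limit else 1.0

def dither_gray(width, height, value, n=8):
    """Dither a flat gray image of the given value; returns rows of 0.0 / 1.0."""
    matrix = bayer_matrix(n)
    return [[find_closest(matrix, x, y, value) for x in range(width)]
            for y in range(height)]
```

`bayer_matrix(8)` reproduces the table above row for row, and dithering a flat 50% gray over one 8x8 tile turns on exactly half of the pixels, which is why the pattern preserves average brightness.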

Comparison screenshots

original gradient

8x8 dither

4x4 dither

2x2 dither

March 9, 2011

GLSL dithering

Looking at the previous post (crosshatch) inspired me to take a deeper look into dithering algorithms. http://en.wikipedia.org/wiki/Dithering

After a few minutes I came up with a very simple b/w Bayer ordered dithering shader. I am quite sure someone has done it before me, but it was a nice exercise for me.

    // Bayer Ordered dithering
    // note: round() requires GLSL 1.30 or later
    uniform sampler2D bgl_RenderedTexture;

    void main()
    {
        vec3 color = texture2D(bgl_RenderedTexture, gl_TexCoord[0].xy).rgb;
        vec3 luminosity = vec3(0.30, 0.59, 0.11);
        float lum = dot(luminosity, color);

        float v = gl_FragCoord.x; // same component as the original .s
        float h = gl_FragCoord.y; // same component as the original .t

        gl_FragColor = vec4(1.0, 1.0, 1.0, 1.0);

        // each darker luminance band multiplies in another pixel pattern
        if (lum < 0.9)
        {
            gl_FragColor.rgb *= round((fract(v/2.0))+(fract(h/2.0)));
        }
        if (lum < 0.75)
        {
            gl_FragColor.rgb *= round((fract(v+v/2.0))+(fract(h+h/2.0)));
        }
        if (lum < 0.5)
        {
            gl_FragColor.rgb *= round((fract(v+v+v/2.0))+(fract(h+h/2.0)));
        }
        if (lum < 0.25)
        {
            gl_FragColor.rgb *= round((fract(v+v/2.0))+(fract(h+h+h/2.0)));
        }
    }

And I just found a color ordered dithering shader here (4x4 Bayer matrix):

http://www.assembla.com/code/MUL2010_OpenGLScenePostprocessing/subversion/nodes/MUL%20FBO/Shaders/dithering.frag?rev=83

    // Ordered dithering aka Bayer matrix dithering
    uniform sampler2D bgl_RenderedTexture;

    float scale = 1.0;

    float find_closest(int x, int y, float c0)
    {
        // 4x4 threshold matrix, one vec4 per row
        vec4 dither[4];
        dither[0] = vec4( 1.0, 33.0,  9.0, 41.0);
        dither[1] = vec4(49.0, 17.0, 57.0, 25.0);
        dither[2] = vec4(13.0, 45.0,  5.0, 37.0);
        dither[3] = vec4(61.0, 29.0, 53.0, 21.0);

        float limit = 0.0;
        if (x < 4)
        {
            limit = (dither[x][y] + 1.0) / 64.0;
        }

        if (c0 < limit)
        {
            return 0.0;
        }
        else
        {
            return 1.0;
        }
    }

    void main(void)
    {
        vec3 rgb = texture2D(bgl_RenderedTexture, gl_TexCoord[0].xy).rgb;

        vec2 xy = gl_FragCoord.xy * scale;
        int x = int(mod(xy.x, 4.0));
        int y = int(mod(xy.y, 4.0));

        // dither each color channel separately
        vec3 finalRGB;
        finalRGB.r = find_closest(x, y, rgb.r);
        finalRGB.g = find_closest(x, y, rgb.g);
        finalRGB.b = find_closest(x, y, rgb.b);

        // for black-and-white output, dither the luminance instead:
        // float grayscale = dot(rgb, vec3(0.299, 0.587, 0.114));
        // finalRGB = vec3(find_closest(x, y, grayscale));

        gl_FragColor = vec4(finalRGB, 1.0);
    }

Comparison screenshots (click to see full size):

Without filter

B/W dithering

Color dithering

crosshatch shader

Found a nice crosshatch shader:

http://learningwebgl.com/blog/?p=2858

    // Source
    // http://learningwebgl.com/blog/?p=2858
    uniform sampler2D bgl_RenderedTexture;

    void main()
    {
        float lum = length(texture2D(bgl_RenderedTexture, gl_TexCoord[0].xy).rgb);

        gl_FragColor = vec4(1.0, 1.0, 1.0, 1.0);

        if (lum < 1.00) {
            if (mod(gl_FragCoord.x + gl_FragCoord.y, 10.0) == 0.0) {
                gl_FragColor = vec4(0.0, 0.0, 0.0, 1.0);
            }
        }

        if (lum < 0.75) {
            if (mod(gl_FragCoord.x - gl_FragCoord.y, 10.0) == 0.0) {
                gl_FragColor = vec4(0.0, 0.0, 0.0, 1.0);
            }
        }

        if (lum < 0.50) {
            if (mod(gl_FragCoord.x + gl_FragCoord.y - 5.0, 10.0) == 0.0) {
                gl_FragColor = vec4(0.0, 0.0, 0.0, 1.0);
            }
        }

        if (lum < 0.3) {
            if (mod(gl_FragCoord.x - gl_FragCoord.y - 5.0, 10.0) == 0.0) {
                gl_FragColor = vec4(0.0, 0.0, 0.0, 1.0);
            }
        }
    }
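To see what the four tests do without running a shader, here is a rough Python emulation that draws the pattern as text. It assumes pixel centres sit at half-integer coordinates, which is the OpenGL default for gl_FragCoord; `crosshatch` and `render` are hypothetical names for this sketch.

```python
def crosshatch(lum, px, py):
    """Return 0.0 (black line) or 1.0 (white paper) for pixel (px, py).

    lum is the length of the RGB vector, as in the shader (0 .. sqrt(3)).
    """
    # gl_FragCoord points at pixel centres, hence the +0.5
    fx, fy = px + 0.5, py + 0.5
    # (luminance threshold, diagonal coordinate) for each layer of lines
    layers = (
        (1.00, fx + fy),
        (0.75, fx - fy),
        (0.50, fx + fy - 5.0),
        (0.30, fx - fy - 5.0),
    )
    for threshold, coord in layers:
        # a pixel turns black as soon as any enabled layer hits it
        if lum < threshold and coord % 10.0 == 0.0:
            return 0.0
    return 1.0

def render(lum, width=20, height=10):
    """Render a flat area of the given luminance as rows of '#' and '.'."""
    return ["".join("#" if crosshatch(lum, x, y) == 0.0 else "." for x in range(width))
            for y in range(height)]
```

Printing `"\n".join(render(0.9))` shows a single set of diagonals ten pixels apart, while `render(0.2)` adds the opposite diagonals plus a second, offset set of each, so darker areas get a denser hatch.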

hello world!

A real mess is forming on my hard drive, so I am going to use this blog as a sorted archive of game development stuff.

Hopefully...
